Exploring 5 Use Cases of AI in Construction Management

Dmytro Spilka·5 min

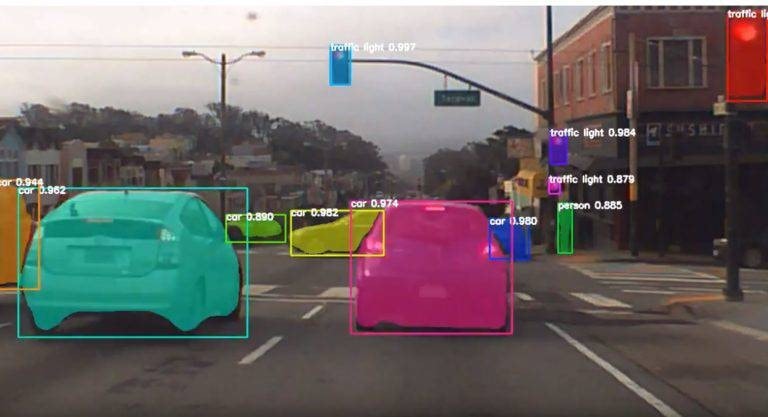

Object detection algorithm result. Source: CB Information Services, Inc., www.cbinsights.com, October 31, 2018, https://www.cbinsights.com/research/startups-drive-auto-industry-disruption/.

Pretty cool, right? However, sight alone isn’t enough to make an autonomous vehicle operate properly...

Sensor Fusion is when data from other sensors is integrated with the data from the cameras. Incorporating the different types of data allows the car to get a better understanding of its environment, similar to the way a human uses all of their senses to get a better sense of their surroundings.

There are usually five types of sensors on a self-driving car: ultrasonic sensors, radar, cameras, LiDAR, and GNSS. Ultrasonic sensors, radar, and LiDAR are all used to measure the distance to an object. The difference between them is how they do it: ultrasonic sensors use sound waves and only work at short range, radar uses radio waves and keeps working in rain or fog, and LiDAR uses laser pulses to build a detailed 3D picture of the surroundings, giving the vehicle 360° visibility. The GNSS receiver (GPS is one example) uses satellite signals to estimate the car’s position in the world.
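To make the idea of combining distance readings concrete, here is a minimal sketch in Python. It uses inverse-variance weighting, which is one common way to merge noisy measurements of the same quantity, not necessarily what any particular car does. The `RangeReading` class, the `fuse_ranges` function, and all the numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    sensor: str        # which sensor produced the reading
    distance_m: float  # measured distance to the object, in meters
    variance: float    # assumed noise variance in m^2 (illustrative values)


def fuse_ranges(readings):
    """Merge several distance readings with inverse-variance weighting.

    Less noisy sensors get a larger weight, and the fused estimate is
    less uncertain than any single reading.
    """
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    fused_variance = 1.0 / total
    return fused, fused_variance


# Hypothetical readings of the same nearby obstacle.
readings = [
    RangeReading("radar", 3.2, 0.09),
    RangeReading("lidar", 3.0, 0.01),
    RangeReading("ultrasonic", 3.1, 0.04),
]

fused, var = fuse_ranges(readings)
print(f"fused distance: {fused:.2f} m, variance: {var:.4f} m^2")
```

The fused distance lands closest to the LiDAR reading because that reading was given the smallest assumed noise, while the other two sensors still nudge the result.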

Now, moving on to the next component...

Localization is how a car identifies its location in the world. This is done using algorithms that use data from sources such as GPS, landmark positions, and the sensor fusion stage. An initial estimate is drawn from the GPS data, and then the movements of the car, landmark positions, and other pieces of data are combined to refine it into a final prediction of the location. In good conditions, the error can be as little as 1 to 2 cm.
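The "rough GPS estimate, then refine" idea can be sketched with a one-dimensional Kalman filter. This is a simplified stand-in for illustration only: a real car tracks position in two or three dimensions plus heading, and the article does not say which algorithm is used. The function names and every number below are assumptions.

```python
def predict(mean, var, motion, motion_var):
    """Move the estimate by the distance the car drove; uncertainty grows."""
    return mean + motion, var + motion_var


def update(mean, var, measurement, meas_var):
    """Blend in a position measurement; uncertainty shrinks."""
    gain = var / (var + meas_var)
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var


def localize(gps_position, gps_var, steps, motion_var=0.04, landmark_var=0.01):
    """Start from a GPS fix, then refine with odometry and landmark sightings.

    `steps` is a list of (distance_driven_m, landmark_based_position_m) pairs.
    """
    position, variance = gps_position, gps_var
    for motion, landmark_position in steps:
        position, variance = predict(position, variance, motion, motion_var)
        position, variance = update(position, variance, landmark_position, landmark_var)
    return position, variance


# Hypothetical drive along a straight road: GPS is only good to about 3 m
# (variance 9 m^2), while landmark sightings are good to about 10 cm.
steps = [(5.0, 105.2), (5.0, 110.1), (5.0, 114.9)]
position, variance = localize(100.0, 9.0, steps)
print(f"position: {position:.2f} m, std dev: {variance ** 0.5:.3f} m")
```

Even though the starting GPS fix is only accurate to a few meters in this toy setup, the uncertainty drops to under 10 cm after a handful of landmark updates.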

Now, I know what you’re thinking. All of this is great and stuff, but will it still work in bad weather? The answer is yes! In inclement weather, cars can be localized using the Localizing Ground Penetrating Radar (LGPR).

After localizing, your current location is known, so all you have to do now is get to your destination!

Path planning is pretty self-explanatory. It’s the component that charts the car’s path to its destination based on the information the sensors are providing. Using data from all the sensors makes it possible for the car to predict whether it needs to slow down, accelerate, change lanes, or stop altogether.

Another task done in the path planning stage is predicting the movements of vehicles in close proximity to avoid collisions. After the movements are predicted, the car decides which action is safe to make.
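Here is a toy sketch of the "predict, then decide" step for a single car ahead in the same lane. It assumes the other car keeps a constant speed and uses a two-second following gap as the safety rule. Both are simplifying assumptions, and `predict_gap` and `choose_action` are hypothetical names. Lane changes are left out to keep it short.

```python
def predict_gap(ego_speed, lead_speed, gap_m, horizon_s):
    """Predict the gap to the car ahead after `horizon_s` seconds,
    assuming both cars hold their current speed (in m/s)."""
    return gap_m + (lead_speed - ego_speed) * horizon_s


def choose_action(ego_speed, lead_speed, gap_m, speed_limit,
                  horizon_s=3.0, min_time_gap_s=2.0):
    """Pick a simple action based on the predicted gap."""
    future_gap = predict_gap(ego_speed, lead_speed, gap_m, horizon_s)
    safe_gap = ego_speed * min_time_gap_s  # distance covered in the time gap

    if future_gap <= 0:
        return "stop"          # predicted to reach the car ahead
    if future_gap < safe_gap:
        return "slow_down"     # still clear, but closer than the safe gap
    if ego_speed < speed_limit:
        return "accelerate"    # room ahead and below the limit
    return "keep_speed"


# Speeds in m/s, distances in meters; all values are made up.
print(choose_action(ego_speed=20, lead_speed=10, gap_m=25, speed_limit=25))
print(choose_action(ego_speed=20, lead_speed=18, gap_m=40, speed_limit=25))
print(choose_action(ego_speed=20, lead_speed=22, gap_m=60, speed_limit=25))
```

A real planner would track many vehicles at once and weigh far more options, but the pattern is the same: predict where others will be, then pick an action that stays safe.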

Finally, the most exciting component of all…

Control is the component that makes it possible for the vehicle to execute the course created in the path planning stage. It handles all the parts of driving that a human normally would, such as turning the steering wheel or hitting the brakes.
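One classic way to turn "follow this path" into steering commands is a PID controller, sketched below. The article does not say which controller a given car uses, so treat this as an illustration. The gains, the `simulate_lane_centering` helper, and the toy vehicle model (sideways speed directly proportional to the steering command) are all assumptions.

```python
class PID:
    """Textbook PID controller: reacts to the current error (P),
    the accumulated error (I), and how fast the error changes (D)."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def step(self, error, dt):
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0  # no history yet on the first call
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_lane_centering(offset_m=1.0, steps=100, dt=0.1):
    """Steer a toy car back to the lane center (offset 0).

    Toy model: the steering command directly sets the sideways speed.
    The integral gain is zero here, so this run behaves like a PD controller.
    """
    pid = PID(kp=0.8, ki=0.0, kd=0.1)
    for _ in range(steps):
        error = 0.0 - offset_m          # how far we are from the target
        command = pid.step(error, dt)
        offset_m += command * dt        # apply the command for one time step
    return offset_m


final_offset = simulate_lane_centering()
print(f"offset after 10 s: {final_offset:.4f} m")
```

Starting one meter off center, the toy car ends up within a centimeter of the center line after ten simulated seconds.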

This is where autonomous vehicles have an advantage over conventional cars: they can be a lot more precise than a car operated by a human driver. This extra precision is what can make self-driving cars safer than conventional cars. Most car accidents are caused by human error, so taking the human out of the equation could mean far fewer accidents.

Ramandeep Saini is a writer who covers topics in emerging tech, such as artificial intelligence. She’s served as a consultant to companies such as Walmart Canada and Wealthsimple in the past, using her expertise in tech to guide them towards their corporate goals. In her free time, she runs an art blog and enjoys volunteering with local nonprofits.